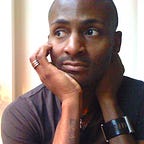

This Computer Scientist Built an App That Randomized His Life

What would happen if you gave up your free will?

Algorithms control more of our experiences than ever before. What we watch on Netflix, what we listen to on Spotify, what gets recommended to us on Instagram: all of these choices are governed by software designed to learn our preferences and feed us more of what we want. But…